BUSINESS-TECH | ChatGPT is not God: Creative minds think they’ll stay a step ahead of AI

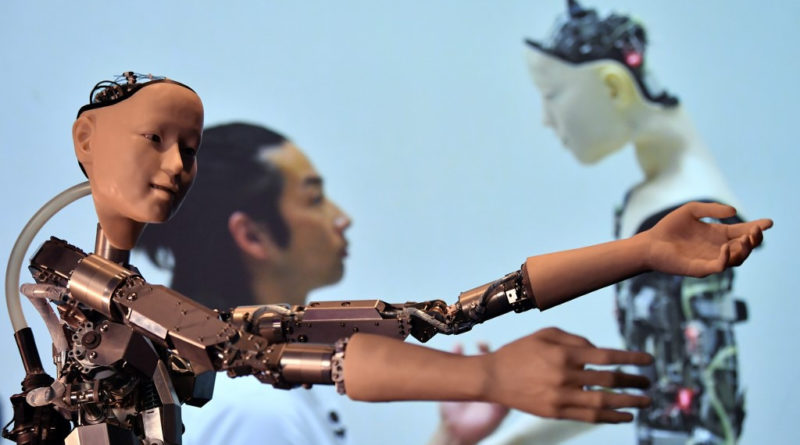

An AI robot with a humanistic face is pictured during a photocall to promote an exhibition entitled ‘AI: More than Human’, at the Barbican Centre in London on May 15, 2019. (AFP/Ben Stansall)

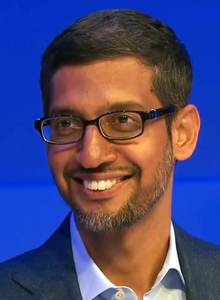

The release of ChatGPT, NVIDIA Canvas and DALL-E suggests that artificial intelligence (AI) will one day disrupt even creative industries, yet workers in the segment believe some space will always be reserved for humans, no matter how sophisticated software becomes.

Audinanto Alif, a freelance writer and bassist for Indonesian metal band Kultus, says AI may produce good, “authentic” creative products, yet they are mainly generic.

“The right analogy for this is mass-produced shoes as opposed to handmade ones. Both are authentic, but the handcrafted ones have special qualities,” Audinanto told The Jakarta Post on Friday.

Read the full story: https://www.thejakartapost.com/business/2023/01/20/chatgpt-is-not-god-creative-minds-think-theyll-stay-a-step-ahead-of-ai.html

TRIVIA

ChatGPT

| Developer(s) | OpenAI |
|---|---|
| Initial release | November 30, 2022 |
| Type | Chatbot |
| License | Proprietary |
| Website | chat |

ChatGPT (Chat Generative Pre-trained Transformer)[1] is a chatbot launched by OpenAI in November 2022. It is built on top of OpenAI’s GPT-3 family of large language models, and is fine-tuned (an approach to transfer learning[2]) with both supervised and reinforcement learning techniques.

ChatGPT was launched as a prototype on November 30, 2022, and quickly garnered attention for its detailed responses and articulate answers across many domains of knowledge. Its uneven factual accuracy was identified as a significant drawback.[3] Following the release of ChatGPT, OpenAI was valued at $29 billion.[4]

Training

Pioneer Building, San Francisco, home of OpenAI HQ

ChatGPT was fine-tuned on top of GPT-3.5 using supervised learning as well as reinforcement learning.[5] Both approaches used human trainers to improve the model’s performance. In the case of supervised learning, the model was provided with conversations in which the trainers played both sides: the user and the AI assistant. In the reinforcement step, human trainers first ranked responses that the model had created in a previous conversation. These rankings were used to create ‘reward models’ that the model was further fine-tuned on using several iterations of Proximal Policy Optimization (PPO).[6][7] PPO algorithms offer a cost-effective alternative to trust region policy optimization algorithms: they avoid many of the computationally expensive operations and run faster.[8][9] The models were trained in collaboration with Microsoft on their Azure supercomputing infrastructure.
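The ranking and PPO steps described above can be illustrated with a minimal Python sketch. It assumes a pairwise (Bradley-Terry style) loss for training a reward model from ranked responses and the commonly used clipped PPO surrogate objective. The text does not state the exact losses OpenAI used, and the function names `pairwise_ranking_loss` and `ppo_clipped_objective` are hypothetical, chosen only for illustration.

```python
import math


def pairwise_ranking_loss(reward_preferred: float, reward_rejected: float) -> float:
    """Loss for one ranked pair: -log(sigmoid(r_preferred - r_rejected)).

    Assumption: the reward model assigns a scalar score to each response,
    and a human trainer ranked one response above the other.
    The loss is small when the preferred response gets the higher score.
    """
    diff = reward_preferred - reward_rejected
    # Numerically stable form of log(1 + exp(-diff)).
    if diff >= 0:
        return math.log1p(math.exp(-diff))
    return -diff + math.log1p(math.exp(diff))


def ppo_clipped_objective(ratio: float, advantage: float, epsilon: float = 0.2) -> float:
    """Clipped PPO surrogate for a single action.

    ratio     -- new_policy_prob / old_policy_prob for the sampled action
    advantage -- how much better the action was than expected
                 (in this setting, derived from the reward model's score)
    epsilon   -- clip range; 0.2 is a common default, assumed here
    """
    clipped_ratio = max(1.0 - epsilon, min(ratio, 1.0 + epsilon))
    # Taking the minimum removes the incentive to move the policy
    # far from the old one in a single update.
    return min(ratio * advantage, clipped_ratio * advantage)


if __name__ == "__main__":
    # The reward model agrees with the human ranking: low loss.
    print(pairwise_ranking_loss(2.0, 0.0))
    # The reward model disagrees with the human ranking: higher loss.
    print(pairwise_ranking_loss(0.0, 2.0))
    # A large policy change on a good action is capped by the clip.
    print(ppo_clipped_objective(ratio=1.5, advantage=1.0))
```

The clipping is what makes PPO cheaper than trust region methods in this sketch: the constraint on policy change is enforced with a simple `min`/`max` rather than a second-order optimization step.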

In addition, OpenAI continues to gather data from ChatGPT users that could be used to further train and fine-tune ChatGPT. Users are allowed to upvote or downvote the responses they receive from ChatGPT; upon upvoting or downvoting, they can also fill out a text field with additional feedback.[10][11][12]

Features and limitations

While the core function of a chatbot is to mimic a human conversationalist, ChatGPT is versatile. For example, it has the ability to write and debug computer programs; to compose music, teleplays, fairy tales, and student essays; to answer test questions (sometimes, depending on the test, at a level above the average human test-taker);[13] to write poetry and song lyrics;[14] to emulate a Linux system; to simulate an entire chat room; to play games like tic-tac-toe; and to simulate an ATM.[15] ChatGPT’s training data includes man pages and information about Internet phenomena and programming languages, such as bulletin board systems and the Python programming language.[15]

In comparison to its predecessor, InstructGPT, ChatGPT attempts to reduce harmful and deceitful responses.[16] In one example, while InstructGPT accepts the premise of the prompt “Tell me about when Christopher Columbus came to the US in 2015” as being truthful, ChatGPT acknowledges the counterfactual nature of the question and frames its answer as a hypothetical consideration of what might happen if Columbus came to the U.S. in 2015, using information about Columbus’ voyages and facts about the modern world – including modern perceptions of Columbus’ actions.[6]

Unlike most chatbots, ChatGPT remembers previous prompts given to it in the same conversation; journalists have suggested that this will allow ChatGPT to be used as a personalized therapist.[17] To prevent offensive content from being submitted to or produced by ChatGPT, queries are filtered through OpenAI’s company-wide[18][19] moderation API, and potentially racist or sexist prompts are dismissed.[6][17]

ChatGPT suffers from multiple limitations. OpenAI acknowledged that ChatGPT “sometimes writes plausible-sounding but incorrect or nonsensical answers”.[6] This behavior is common to large language models and is called hallucination.[20] The reward model of ChatGPT, designed around human oversight, can be over-optimized and thus hinder performance, an effect known as Goodhart’s law.[21] ChatGPT has limited knowledge of events that occurred after 2021. According to the BBC, as of December 2022 ChatGPT is not allowed to “express political opinions or engage in political activism”.[22] Yet, research suggests that ChatGPT exhibits a pro-environmental, left-libertarian orientation when prompted to take a stance on political statements from two established voting advice applications.[23] In training ChatGPT, human reviewers preferred longer answers, irrespective of actual comprehension or factual content.[6] Training data also suffers from algorithmic bias, which may be revealed when ChatGPT responds to prompts including descriptors of people. In one instance, ChatGPT generated a rap indicating that women and scientists of color were inferior to white and male scientists.[24][25]

Service

ChatGPT was launched on November 30, 2022, by San Francisco-based OpenAI, the creator of DALL·E 2 and Whisper. The service was initially free to the public, with plans to monetize it later.[26] By December 4, OpenAI estimated ChatGPT already had over one million users.[10] CNBC wrote on December 15, 2022, that the service “still goes down from time to time”.[27] The service works best in English, but is also able to function in some other languages, to varying degrees of success.[14] Unlike some other recent high-profile advances in AI, as of December 2022, there is no sign of an official peer-reviewed technical paper about ChatGPT.[28]

According to OpenAI guest researcher Scott Aaronson, OpenAI is working on a tool to attempt to watermark its text generation systems so as to combat bad actors using their services for academic plagiarism or for spam.[29][30] The New York Times relayed in December 2022 that the next version of GPT, GPT-4, has been “rumored” to be launched sometime in 2023.[17]

Reception, criticism and issues

Positive reactions

ChatGPT was met in December 2022 with generally positive reviews; The New York Times labeled it “the best artificial intelligence chatbot ever released to the general public”.[31] Samantha Lock of The Guardian noted that it was able to generate “impressively detailed” and “human-like” text.[32] Technology writer Dan Gillmor used ChatGPT on a student assignment, and found its generated text was on par with what a good student would deliver and opined that “academia has some very serious issues to confront”.[33] Alex Kantrowitz of Slate magazine lauded ChatGPT’s pushback to questions related to Nazi Germany, including the claim that Adolf Hitler built highways in Germany, which was met with information regarding Nazi Germany’s use of forced labor.[34]

In The Atlantic’s “Breakthroughs of the Year” for 2022, Derek Thompson included ChatGPT as part of “the generative-AI eruption” that “may change our mind about how we work, how we think, and what human creativity really is”.[35]

Kelsey Piper of the Vox website wrote that “ChatGPT is the general public’s first hands-on introduction to how powerful modern AI has gotten, and as a result, many of us are [stunned]” and that “ChatGPT is smart enough to be useful despite its flaws”. Paul Graham of Y Combinator tweeted that “The striking thing about the reaction to ChatGPT is not just the number of people who are blown away by it, but who they are. These are not people who get excited by every shiny new thing. Clearly something big is happening.”[36] Elon Musk wrote that “ChatGPT is scary good. We are not far from dangerously strong AI”.[37] Musk paused OpenAI’s access to a Twitter database pending better understanding of OpenAI’s plans, stating that “OpenAI was started as open-source and non-profit. Neither are still true.”[38][39] Musk had co-founded OpenAI in 2015, in part to address existential risk from artificial intelligence, but had resigned in 2018.[39]

Google CEO Sundar Pichai upended the work of numerous internal groups in response to the threat of disruption by ChatGPT.[40]

In December 2022, Google internally expressed alarm at the unexpected strength of ChatGPT and the newly discovered potential of large language models to disrupt the search engine business, and CEO Sundar Pichai “upended” and reassigned teams within multiple departments to aid in its artificial intelligence products, according to The New York Times.[40] The Information reported on January 3, 2023, that Microsoft Bing was planning to add optional ChatGPT functionality into its public search engine, possibly around March 2023.[41][42]

Negative reactions

In a December 2022 opinion piece, economist Paul Krugman wrote that ChatGPT would affect the demand for knowledge workers.[43] The Verge’s James Vincent saw the viral success of ChatGPT as evidence that artificial intelligence had gone mainstream.[7] Journalists have commented on ChatGPT’s tendency to “hallucinate”.[44] Mike Pearl of Mashable tested ChatGPT with multiple questions. In one example, he asked ChatGPT for “the largest country in Central America that isn’t Mexico”. ChatGPT responded with Guatemala, when the answer is instead Nicaragua.[45] When CNBC asked ChatGPT for the lyrics to “The Ballad of Dwight Fry”, ChatGPT supplied invented lyrics rather than the actual lyrics.[27] Researchers cited by The Verge compared ChatGPT to a “stochastic parrot”,[46] as did Professor Anton Van Den Hengel of the Australian Institute for Machine Learning.[47]

In December 2022, the question and answer website Stack Overflow banned the use of ChatGPT for generating answers to questions, citing the factually ambiguous nature of ChatGPT’s responses.[3] In January 2023, the International Conference on Machine Learning banned any undocumented use of ChatGPT or other large language models to generate any text in submitted papers.[48]

Economist Tyler Cowen expressed concerns regarding its effects on democracy, citing the possibility of using it to write automated comments that influence the decision process for new regulations.[49] The Guardian questioned whether any content found on the Internet after ChatGPT’s release “can be truly trusted” and called for government regulation.[50]

In January 2023, after being sent a song written by ChatGPT in the style of Nick Cave,[51] the songwriter himself responded on The Red Hand Files[52] (and was later quoted in The Guardian), saying the act of writing a song is “a blood and guts business … that requires something of me to initiate the new and fresh idea. It requires my humanness.” He went on to say “With all the love and respect in the world, this song is bullshit, a grotesque mockery of what it is to be human, and, well, I don’t much like it.”[51][53]

Implications for cybersecurity

Check Point Research and others noted that ChatGPT was capable of writing phishing emails and malware, especially when combined with OpenAI Codex.[54] The CEO of ChatGPT creator OpenAI, Sam Altman, wrote that advancing software could pose “(for example) a huge cybersecurity risk” and went on to predict “we could get to real AGI (artificial general intelligence) in the next decade, so we have to take the risk of that extremely seriously”. Altman argued that, while ChatGPT is “obviously not close to AGI”, one should “trust the exponential. Flat looking backwards, vertical looking forwards.”[10]

Implications for education

In The Atlantic magazine, Stephen Marche noted that its effect on academia and especially application essays is yet to be understood.[55] California high school teacher and author Daniel Herman wrote that ChatGPT would usher in “The End of High School English”.[56]

In the Nature journal, Chris Stokel-Walker pointed out that teachers should be concerned about students using ChatGPT to outsource their writing, but that education providers will adapt to enhance critical thinking or reasoning.[57]

Emma Bowman with NPR wrote of the danger of students plagiarizing through an AI tool that may output biased or nonsensical text with an authoritative tone: “There are still many cases where you ask it a question and it’ll give you a very impressive-sounding answer that’s just dead wrong.”[58]

Joanna Stern with The Wall Street Journal described cheating in American high school English with the tool by submitting a generated essay.[59] Professor Darren Hick of Furman University described noticing ChatGPT’s “style” in a paper submitted by a student. An online GPT detector claimed the paper was 99.9% likely to be computer-generated, but Hick had no hard proof. However, the student in question confessed to using GPT when confronted, and as a consequence failed the course.[60] Hick suggested a policy of giving an ad-hoc individual oral exam on the paper topic if a student is strongly suspected of submitting an AI-generated paper.[61] Edward Tian, a senior undergraduate student at Princeton University, created a program named “GPTZero” that determines how much of a text is AI-generated,[62] which can be used to check whether an essay is human-written and so combat academic plagiarism.[63][64]

As of January 4, 2023, the New York City Department of Education has restricted access to ChatGPT from its public school internet and devices.[65][66]

Ethical concerns in training

A Time investigation revealed that, in order to build a safety system against toxic content (e.g. sexual abuse, violence, racism, sexism), OpenAI used outsourced Kenyan workers earning less than $2 per hour to label toxic content. These labels were used to train a model to detect such content in the future. The outsourced laborers were exposed to such toxic and dangerous content that they described the experience as “torture”.[67] OpenAI’s outsourcing partner was Sama, a training-data company based in San Francisco, California.

Jailbreaks

ChatGPT attempts to reject prompts that may violate its content policy. However, in early December 2022 some users managed to jailbreak ChatGPT by using various prompt engineering techniques to bypass these restrictions, and successfully tricked it into giving instructions for how to create a Molotov cocktail or a nuclear bomb, or into generating arguments in the style of a Neo-Nazi.[68] A Toronto Star reporter had uneven personal success in getting ChatGPT to make inflammatory statements shortly after launch: ChatGPT was tricked into endorsing the Russian invasion of Ukraine, but even when asked to play along with a fictional scenario, ChatGPT balked at generating arguments for why Canadian Prime Minister Justin Trudeau was guilty of treason.[69][70]
